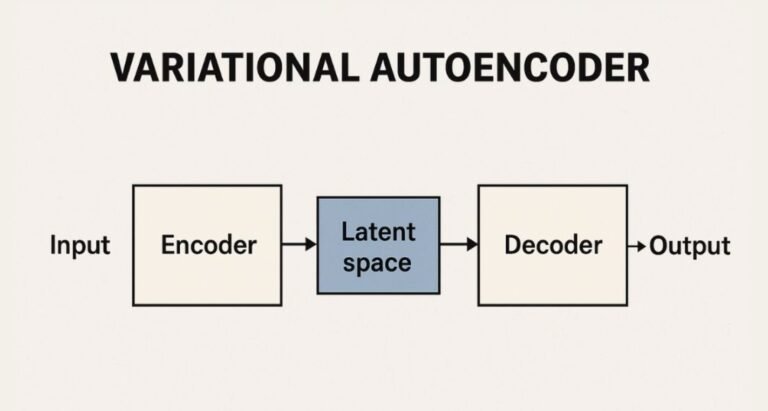

Imagine you are in a vast art museum. The museum holds millions of paintings, each with its own colors, brush strokes, and moods. Now imagine a skilled curator who can walk through this gigantic hall, observe every detail, and compress the essence of each painting into a single poetic line. Later, a talented artist uses that poetic line to recreate a full painting that closely resembles the original. This is the spirit of a Variational Autoencoder (VAE): a system that learns to compress and reimagine data, capturing patterns hidden beneath the surface.

VAEs are not merely compression tools. They are storytellers that represent complex data through a structured inner world called the latent space. This inner world allows the model to generate new data that feels familiar yet original. Instead of just copying, VAEs learn the underlying tone, rhythm, and meaning of data patterns. That capability makes them powerful engines for generative modeling.

The Core Idea Behind VAEs

At its heart, a VAE is designed to encode data into a compact representation and then decode that representation back to something close to the original. But unlike basic compression, VAEs are probabilistic. They do not store a single fixed summary for each input. Instead, they learn a distribution over possible summaries, so that sampling from it yields plausible variations of the input.

This is what separates a VAE from simpler autoencoders. A traditional autoencoder maps each input to one fixed code, much like a fingerprint of the data. A VAE, however, acknowledges that art, speech, faces, and handwritten letters all come with natural variations. It preserves the possibility of different outcomes rather than a single rigid description. This makes VAEs well suited to tasks like image synthesis, speech generation, molecule generation for drug discovery, and more.

The Encoder: Learning the Essence

The encoder acts like the museum curator. It examines each input sample and extracts what matters. But it does not produce just one compressed value. It estimates two key parameters of a probability distribution, usually a Gaussian: a mean and a variance. The mean marks where the input sits in the compressed space, and the variance describes how much the representation may vary around that point.
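To make the curator's two outputs concrete, here is a minimal sketch of an encoder forward pass in NumPy. Everything specific in it is an assumption for illustration: the layer sizes, the single `tanh` hidden layer, the randomly initialised (untrained) weights, and the common convention of predicting the log of the variance rather than the variance itself so the result is always positive once exponentiated.

```python
import numpy as np

rng = np.random.default_rng(0)
input_dim, hidden_dim, latent_dim = 784, 64, 2  # illustrative sizes (assumed)

# Untrained random weights; a real encoder would learn these during training.
W_hidden = rng.normal(0.0, 0.01, (input_dim, hidden_dim))
W_mu = rng.normal(0.0, 0.01, (hidden_dim, latent_dim))
W_log_var = rng.normal(0.0, 0.01, (hidden_dim, latent_dim))

def encode(x):
    """Map a batch of inputs to the mean and log-variance of a Gaussian."""
    h = np.tanh(x @ W_hidden)   # shared hidden features
    mu = h @ W_mu               # head 1: where the code is centred
    log_var = h @ W_log_var     # head 2: how much the code may vary
    return mu, log_var

x = rng.random((4, input_dim))  # 4 fake inputs standing in for flattened images
mu, log_var = encode(x)
print(mu.shape, log_var.shape)  # (4, 2) (4, 2)
```

Each input gets its own mean and its own variance, which is what lets the model describe both a sample and the spread around it.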

For example, when encoding handwritten digits, the encoder may learn that a certain group of shapes represents a style of writing the number 2, but it will also learn how much that style tends to change from one handwriting sample to another. The encoder therefore becomes both analytical and flexible. It captures patterns while respecting natural diversity.

This flexibility gives VAEs their generative power. The model can sample new points from this distribution and decode them into plausible outputs.
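Sampling from the encoder's distribution is usually done with the reparameterization trick: draw standard normal noise, scale it by the standard deviation, and shift it by the mean. The sketch below assumes a Gaussian with independent dimensions and a log-variance parameterization. The function name `sample_latent` and the example values of `mu` and `log_var` are made up for illustration.

```python
import numpy as np

def sample_latent(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, I)."""
    sigma = np.exp(0.5 * log_var)        # log-variance -> standard deviation
    eps = rng.standard_normal(mu.shape)  # noise that does not depend on the model
    return mu + sigma * eps

rng = np.random.default_rng(0)
mu = np.array([0.5, -1.0])       # hypothetical encoder mean
log_var = np.array([-2.0, 0.0])  # hypothetical encoder log-variance

# Many draws for the same input should scatter around mu with spread sigma.
samples = np.stack([sample_latent(mu, log_var, rng) for _ in range(10000)])
print(samples.mean(axis=0))  # close to mu
print(samples.std(axis=0))   # close to exp(0.5 * log_var)
```

Keeping the randomness in `eps` and outside the model's parameters is what allows gradients to flow through `mu` and `log_var` when this step sits inside a trained network.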

One might encounter this topic in advanced modules of an AI course in Pune, where learners explore how generative models manage uncertainty and variability in data. Such study deepens understanding of how machine learning can create instead of merely classify.

Latent Space: The Hidden World of Meaning

Latent space is the secret room where meaning is stored. Each encoded point in this space is a distilled representation of an input sample. Similar samples sit near each other. Dissimilar ones drift apart. Latent space works like a map of ideas. Move a little on the map, and you see gentle transitions. Move far, and you find completely new expressions.

For images, shifting in latent space may slowly change the angle of a face, the curl of a smile, or the lighting pattern. This smooth navigability is a defining strength of VAEs. It allows gradual interpolation between different states. Imagine morphing a cat into a lion, step by step, without sudden distortions. VAEs make such transformations possible because latent space is continuous and meaningful.
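The step-by-step morph described above amounts to walking in a straight line between two latent codes and decoding each stop along the way. Here is a minimal sketch of the walking part only. The two latent vectors are invented values, and the decoder that would turn each point into an image is not shown.

```python
import numpy as np

def interpolate(z_start, z_end, steps):
    """Return `steps` evenly spaced points on the line from z_start to z_end."""
    weights = np.linspace(0.0, 1.0, steps)[:, None]  # column of blend weights
    return (1.0 - weights) * z_start + weights * z_end

z_cat = np.array([-1.0, 0.5])   # hypothetical latent code for a cat image
z_lion = np.array([2.0, -0.5])  # hypothetical latent code for a lion image

path = interpolate(z_cat, z_lion, steps=5)
print(path)  # first row equals z_cat, last row equals z_lion
```

Passing each row of `path` through a trained decoder would produce the intermediate frames. Straight-line interpolation is the simplest choice; other paths through latent space are possible.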

The Decoder: Recreating the Story

The decoder is the artist who paints from the poetic summary. It takes a point from latent space and reconstructs it into a full data sample. Its challenge is to create an output that is recognizable while honoring the variability learned by the encoder. The decoder learns through feedback: comparing its output to the original and adjusting until the reconstructions become convincing.

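But reconstruction alone is not enough. VAEs introduce a special loss function that balances two goals:

- Make reconstructions accurate, by penalizing the difference between the input and the decoder's output.

- Make the latent space well-behaved and continuous, by keeping each encoded distribution close to a simple prior, typically a standard normal, as measured by the KL divergence.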

This balance discourages the model from simply memorizing data and encourages it to generalize. Because encoded distributions stay close to the prior and overlap with one another, points sampled from that region of latent space tend to decode into plausible outputs.
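The two goals can be written as two terms added together. The sketch below is one common formulation, not the only one. It assumes squared error for the reconstruction term (binary cross-entropy is another frequent choice), a standard normal prior, and a diagonal Gaussian encoder described by `mu` and `log_var`. The `beta` weight and all the example numbers are illustrative assumptions.

```python
import numpy as np

def vae_loss(x, x_recon, mu, log_var, beta=1.0):
    """Return (total, reconstruction, kl) for a single example."""
    # Goal 1: the output should match the input.
    recon = np.sum((x - x_recon) ** 2)
    # Goal 2: KL divergence between N(mu, sigma^2) and N(0, I), in closed form.
    kl = -0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var))
    return recon + beta * kl, recon, kl

x = np.array([1.0, 0.0])        # toy input
x_recon = np.array([0.5, 0.0])  # toy reconstruction
mu = np.array([1.0, 0.0])       # toy encoder mean
log_var = np.zeros(2)           # unit variance in each dimension

total, recon, kl = vae_loss(x, x_recon, mu, log_var)
print(recon, kl, total)
```

The KL term is zero only when the encoder outputs exactly the prior (zero mean, unit variance), so any attempt to push codes far apart or shrink their variance to nothing costs something. Raising `beta` favors a tidier latent space at the expense of reconstruction detail.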

Understanding such training dynamics is often emphasized in advanced practice sessions of an AI course in Pune, where learners work on hands-on experiments to visualize latent spaces and tune generative performance.

Applications: Where VAEs Make a Difference

VAEs are widely used in creative and scientific fields.

- Image Generation: Creating new fashion designs, avatars, or animation frames.

- Medical Imaging: Enhancing or reconstructing scanned images from noisy data.

- Speech and Audio Synthesis: Designing natural-sounding voices and music textures.

- Drug Discovery: Generating molecular structures that could serve as pharmaceuticals.

In each case, the VAE helps convert raw data into a structured imagination space that enables controlled innovation.

Conclusion

Variational Autoencoders are much more than mathematical systems. They are explorers of hidden structure and creators of new possibilities. By capturing not only what data is but how it varies, VAEs open doors to new generative horizons. Their latent space becomes a playground where creativity and precision coexist.

From art-inspired generation to scientific breakthroughs, VAEs offer a powerful lens to understand and reshape the world of data. They remind us that behind every complex shape or sound lies a pattern waiting to be discovered, compressed, explored, and reimagined.